My courses, my interests

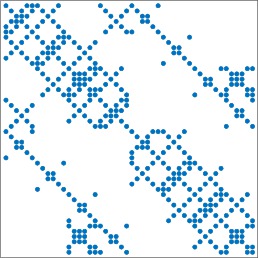

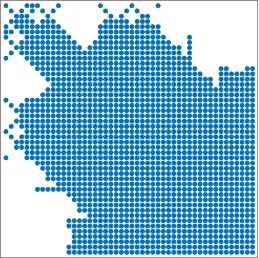

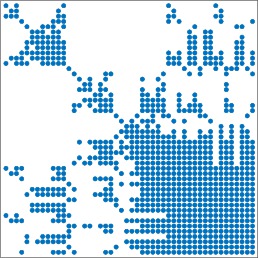

Most of my courses target efficient computations in computational linear algebra. Solving large systems of linear equations is often a focal point of modern computational science. And, of course, the mathematical formulation of this task is inseparably connected not only to numerical analysis and numerical algorithms, but also to the theory and algorithms of computer science. While the central object in this field is the matrix, solving problems efficiently requires treating matrices not as black boxes but looking into their structure. Roughly speaking, we need to exploit matrix sparsity, as the figures illustrate.
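To make "exploiting sparsity" concrete, here is a minimal Python sketch (an illustration added for this page, not material from the courses) of the compressed sparse row (CSR) format: only the nonzero entries are stored, together with their column indices and the position where each row starts, so a matrix-vector product touches only the nonzeros. The function names `dense_to_csr` and `csr_matvec` are hypothetical; in practice one would use a library such as SciPy (`scipy.sparse.csr_matrix`).

```python
# Minimal sketch of CSR storage (hypothetical helper names, illustration only).

def dense_to_csr(a):
    """Convert a dense matrix (list of rows) to CSR arrays."""
    values, col_idx, row_ptr = [], [], [0]
    for row in a:
        for j, x in enumerate(row):
            if x != 0:
                values.append(x)    # keep only nonzero entries
                col_idx.append(j)   # remember their column
        row_ptr.append(len(values))  # row i occupies values[row_ptr[i]:row_ptr[i+1]]
    return values, col_idx, row_ptr


def csr_matvec(values, col_idx, row_ptr, x):
    """Compute y = A x, touching only the stored nonzeros of A."""
    n = len(row_ptr) - 1
    y = [0.0] * n
    for i in range(n):
        s = 0.0
        for k in range(row_ptr[i], row_ptr[i + 1]):
            s += values[k] * x[col_idx[k]]
        y[i] = s
    return y


if __name__ == "__main__":
    # 1D Laplacian (tridiagonal): 3n - 2 nonzeros instead of n * n entries.
    n = 5
    a = [[2 if i == j else -1 if abs(i - j) == 1 else 0 for j in range(n)]
         for i in range(n)]
    values, col_idx, row_ptr = dense_to_csr(a)
    print(len(values), "of", n * n, "entries stored")  # 13 of 25 entries stored
    print(csr_matvec(values, col_idx, row_ptr, [1.0] * n))
```

For large problems the saving grows quickly: a tridiagonal matrix of order n needs about 3n stored entries instead of n², and the product costs proportionally less.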

Once we try to do this, we enter a completely new world. To understand it, we need not only to use concepts of graph theory and other tools of computer science, but also to understand, at least roughly, the development and trends of computer architectures. The two courses I am involved in are about this.

Overall, significantly more emphasis is placed on algorithms. To construct new computational algorithms, we first need understanding, and the main stress is placed exactly on gaining that understanding, assuming that students have obtained a sufficient theoretical background in other courses.

The first of my courses is devoted to direct methods for solving large sparse systems of linear algebraic equations. It can be considered an introduction showing the role of classical, graph-based sparsity.

The second course targets parallel matrix-based computations. This course is shared with my great colleague Jaroslav Hron. While Jaroslav shows how to do real parallel computations (coding in Python in a Unix-based environment), my focus is to show that parallel computation is inevitable and must be taken into account, either to develop new algorithms that run on modern computer architectures or to modify existing implementations so that they respect the features of the hardware. My aim here is to present a general overview rather than get stuck in the details of particular computers, programming patterns, or programming tools, since these are constantly changing.
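One classical way graphs enter direct methods is through fill-in: during Gaussian elimination, zero entries can become nonzero, and how many do depends on the elimination order. The sketch below (an illustration assumed for this page, not course material) models symmetric elimination on the graph of the matrix: eliminating a vertex makes its remaining neighbours pairwise adjacent, and each new edge is a fill entry. It assumes a symmetric nonzero pattern and ignores accidental numerical cancellation; `count_fill` is a hypothetical helper name.

```python
# Minimal sketch of the elimination-graph model of symmetric Gaussian
# elimination (hypothetical helper, illustration only).
from itertools import combinations


def count_fill(edges, order):
    """Return the number of fill edges created by eliminating vertices in `order`."""
    adj = {v: set() for v in order}
    for u, w in edges:
        adj[u].add(w)
        adj[w].add(u)
    eliminated = set()
    fill = 0
    for v in order:
        # Neighbours of v that are still in the remaining graph.
        nbrs = [u for u in adj[v] if u not in eliminated]
        # Eliminating v couples all of them pairwise; new edges are fill-in.
        for a, b in combinations(nbrs, 2):
            if b not in adj[a]:
                adj[a].add(b)
                adj[b].add(a)
                fill += 1
        eliminated.add(v)
    return fill


if __name__ == "__main__":
    # "Arrow" pattern: vertex 0 is coupled to every other vertex.
    n = 6
    star = [(0, j) for j in range(1, n)]
    print("hub first:", count_fill(star, list(range(n))))           # 10
    print("hub last: ", count_fill(star, list(range(1, n)) + [0]))  # 0
```

Eliminating the hub first makes the remaining 5×5 block completely dense (10 fill edges), while eliminating it last creates no fill at all. This is why fill-reducing orderings matter so much for sparse direct solvers.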